Chat Won't Kill SaaS. Here's What Will.

Microsoft's CEO declared SaaS dead and agents the future. He's right about the destination. He's wrong about the vehicle.

Note: when this piece says “SaaS,” it means the thing users see — tab-based applications with structured views, workflows, and governed controls — not the subscription pricing model.

In December 2024, Satya Nadella told a podcast audience that business applications would collapse in the agent era, a prediction widely summarized as “SaaS is dead.”[1] Business logic would move to an AI layer. Instead of hopping between CRM, project management, and accounting tabs, you’d tell an agent what outcome you want. The agent handles the rest.

Fourteen months later, here’s what that future looks like: a procurement lead asks their Copilot “Show me all open orders with delivery delays.” The response arrives as a paragraph. Three vendor names, two date ranges, a suggestion to “review the attached data.” No table. No sort. No drill-down. No approval button. The procurement lead opens Excel. Every time.

Chat-only didn’t kill SaaS. It revealed why SaaS still exists.

Where chat wins

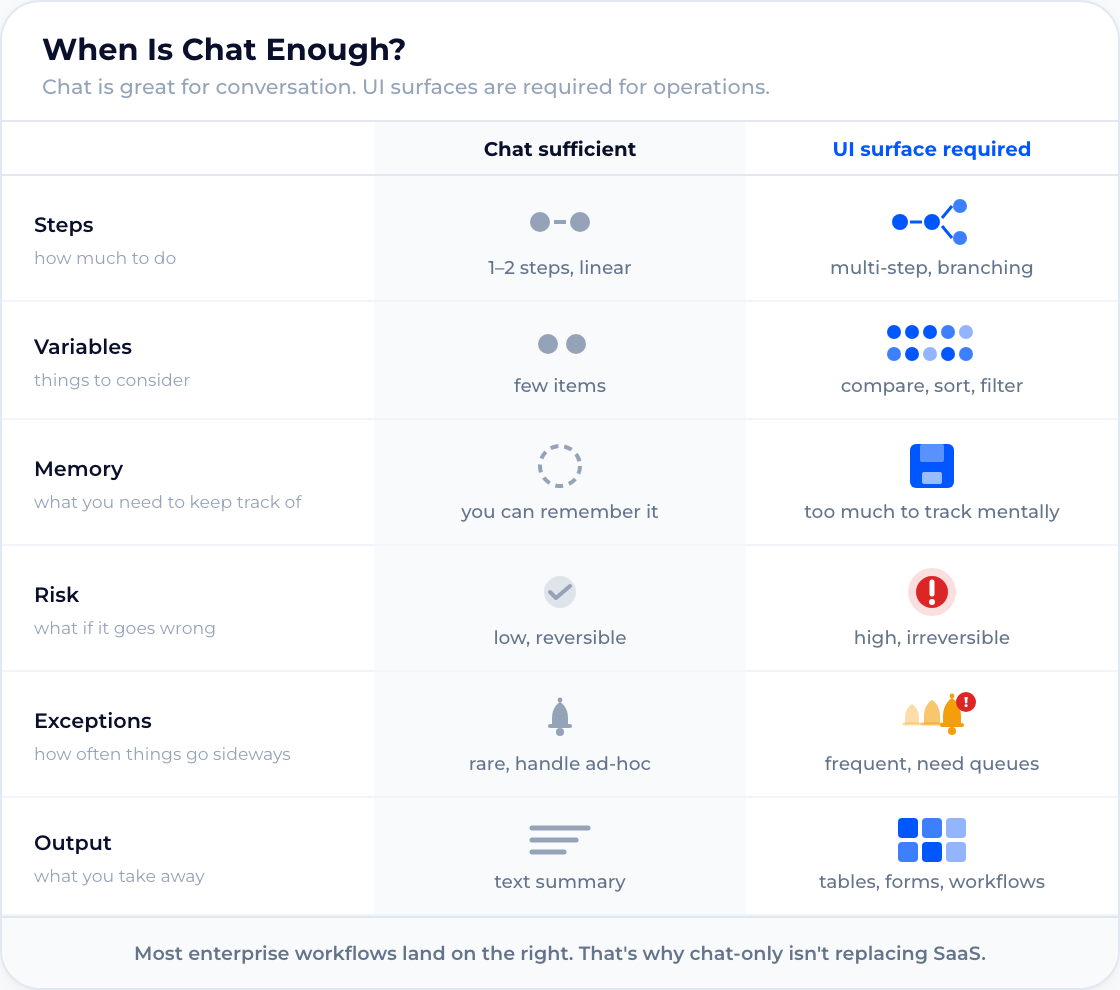

Chat is excellent at what chat does. Ad-hoc Q&A, ideation, unstructured search, first-draft generation, exploratory analysis where the user doesn’t know the question yet. For all of these, a text prompt is the fastest path to orientation. Low-stakes, open-ended, single-turn: chat wins.

But the moment a workflow requires multi-variable comparison, persistent state across steps, or real-time exception handling, chat hits a structural wall. The issue isn’t that chat is bad. The issue is that chat-only is being asked to replace interfaces it was never designed to match.

The downgrade problem

SaaS works because of bandwidth. A dashboard puts forty data points in front of you simultaneously. Color encodes urgency. Spatial position shows relationships. Outliers are visible before you read a single label. The human visual system processes this in parallel (scanning and comparing) within a fraction of a second.

Text is sequential. One sentence after another. A chat window forces you through that sequence, and every new message pushes the previous answer off-screen. Comparing two numbers that a table would display side by side becomes a scrolling exercise. Cognitive fit theory, established by Vessey in Decision Sciences and validated across decades of HCI research, explains why: performance improves when the representation matches the task. For spatial tasks such as spotting trends and outliers, graphical representations outperform tables, because visual processing runs in parallel where text forces serial decoding.[2]

This isn’t a UX preference. It’s a constraint of how human cognition processes information, what cognitive science calls extraneous load: the mental effort spent navigating an interface rather than understanding the data. A well-designed dashboard minimizes that load. A chat window maximizes it.

The history of computing proves the pattern. Ledger books yielded to spreadsheets. Command lines yielded to GUIs. Printed reports yielded to interactive dashboards. Each transition increased information density per unit of time. Chat-only AI reverses the trajectory. It takes the richest AI capabilities and delivers them through the narrowest output channel. That might be a first: a technology generation that ships with less output bandwidth than the one it replaces.

This applies to AI output directly. A smarter model producing a better paragraph is still a paragraph. Trend identification, outlier detection, multi-variable comparison. These tasks require spatial processing that text cannot deliver. Smarter models don’t fix the output bottleneck. Better interfaces do.

SaaS gave users dashboards, drill-downs, and governed workflows. Chat-only agents took all of that away and replaced it with paragraphs. Call it what it is: a regression dressed up as disruption.

We’ve run this experiment before. Early software was a command line: powerful, but sequential, memory-heavy, and hostile to non-experts. GUIs won because they externalized state — lists, windows, tables, constraints — and turned “remember the command” into “see the options.” Chat-only agents are a partial rewind. They re-encode state into paragraphs and put the burden back on the user to compare, filter, validate, and decide. The next interface shift isn’t “chat everywhere.” It’s agent-driven decision surfaces where the model proposes structured state, the user inspects it visually, and actions execute through governed controls.

The industry sees the gap

Count the initiatives. Google published A2UI in December 2025, a declarative specification where agents describe UI components that clients render natively.[3] Open-JSON-UI (OpenAI’s declarative Generative UI schema, now community-adopted via CopilotKit[4]) offers a parallel declarative spec for structured widget output. CopilotKit, LangGraph, and CrewAI built AG-UI, an event-driven transport protocol for real-time agent-to-frontend communication. Vercel shipped AI SDK 6.0 as an agent-first framework with built-in tool approval gates. Anthropic’s MCP connects agents to external tools and data. Microsoft’s Copilot Extensions open a marketplace for third-party integrations.

Six initiatives from six different organizations, all launched or matured within the last 12–18 months. They address different layers of the stack: tool and data connectivity (MCP), UI rendering formats (A2UI, Open-JSON-UI), transport (AG-UI), developer control gates (Vercel AI SDK), and integration marketplaces (Copilot Extensions). But they share three principles that reveal the direction:

- Streaming over request-response. Agents emit events in real-time rather than returning a single text block. The UI updates as the agent reasons.

- Declarative UI over generated code. Agents describe what to render from a pre-approved component catalog. The client decides how. This is a security decision as much as a UX one: declarative specs can be validated, sandboxed, and audited before anything reaches the screen. Generated code can’t.

- Human-in-the-loop as a primitive. Every major framework now includes first-class support for pausing agents and requiring user approval before execution. Not as an add-on. As a core architectural pattern.

The formula: declarative component catalogs + safe transport + approval gates = governance-ready UI rendering.
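To make the second principle concrete, here is a minimal sketch of checking an agent's declarative UI description against a pre-approved component catalog before anything is rendered. The catalog contents, the spec shape, and the `validate_spec` function are hypothetical illustrations, not taken from A2UI, Open-JSON-UI, or any other specification named above.

```python
from typing import Any

# Hypothetical pre-approved catalog: component type -> allowed property names.
CATALOG: dict[str, set[str]] = {
    "table": {"columns", "rows", "sortable"},
    "button": {"label", "action"},
    "text": {"value"},
}


def validate_spec(spec: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the spec may be rendered."""
    problems: list[str] = []
    for index, component in enumerate(spec.get("components", [])):
        kind = component.get("type")
        if kind not in CATALOG:
            problems.append(f"component {index}: type {kind!r} is not in the catalog")
            continue
        # Reject any property the catalog does not list for this component type.
        unknown = set(component.get("props", {})) - CATALOG[kind]
        for name in sorted(unknown):
            problems.append(f"component {index}: property {name!r} is not allowed on {kind}")
    return problems


# Hypothetical agent output: one valid table, one component outside the catalog.
agent_output = {
    "components": [
        {"type": "table", "props": {"columns": ["vendor", "delay_days"], "rows": []}},
        {"type": "script", "props": {"src": "https://example.invalid/x.js"}},
    ]
}

for problem in validate_spec(agent_output):
    print(problem)
```

Because the agent only names components and properties, the client can reject the unknown `script` entry with a plain lookup. Generated code offers no equivalent check.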

This is not three companies experimenting. It’s an industry-wide convergence on the same conclusion: chat-only agents can’t replace SaaS. Agent-native interfaces can.

What exists today: cards, buttons, basic widgets. The published use cases reveal who they’re built for. A2UI’s demos render product cards, visual search results, shopping previews. Consumer scenarios where governance is irrelevant. The enterprise incumbents offer more, but within walls. Microsoft’s Dynamics Copilot renders Adaptive Cards with structured inputs and approval buttons, yet no interactive charts and no custom components. SAP Joule shows Fiori Cards with drill-down, real visual density, but locked to SAP data, locked to Fiori Patterns, and built on a UI framework that predates AI-generated interfaces by a decade. Salesforce Agentforce has the most powerful widget toolkit of the three, but it’s blind to anything outside the CRM. Each platform solved its own silo. None of them solved the cross-system problem.

What enterprise users actually need is structurally different from all of the above: interactive, data-dense decision surfaces. Filterable tables with drill-down, approval workflows with audit trails, dashboards with real-time data feeds, forms that validate against business rules before submission. The enterprise governance layer (access control, corporate identity, cross-system data access, domain ownership) sits on top of all these protocols. None of the current frameworks deliver it.

That gap is structural, not a matter of iteration speed.

What the SaaS replacement looks like

Nadella’s vision isn’t wrong. The replacement for SaaS just isn’t a chat window. It’s a decision surface: a UI that renders model output as structured state and affordances, combining parallel inspection, governed execution, and an audit trail in a single view. Where chat produces answers, a decision surface produces actionable state.

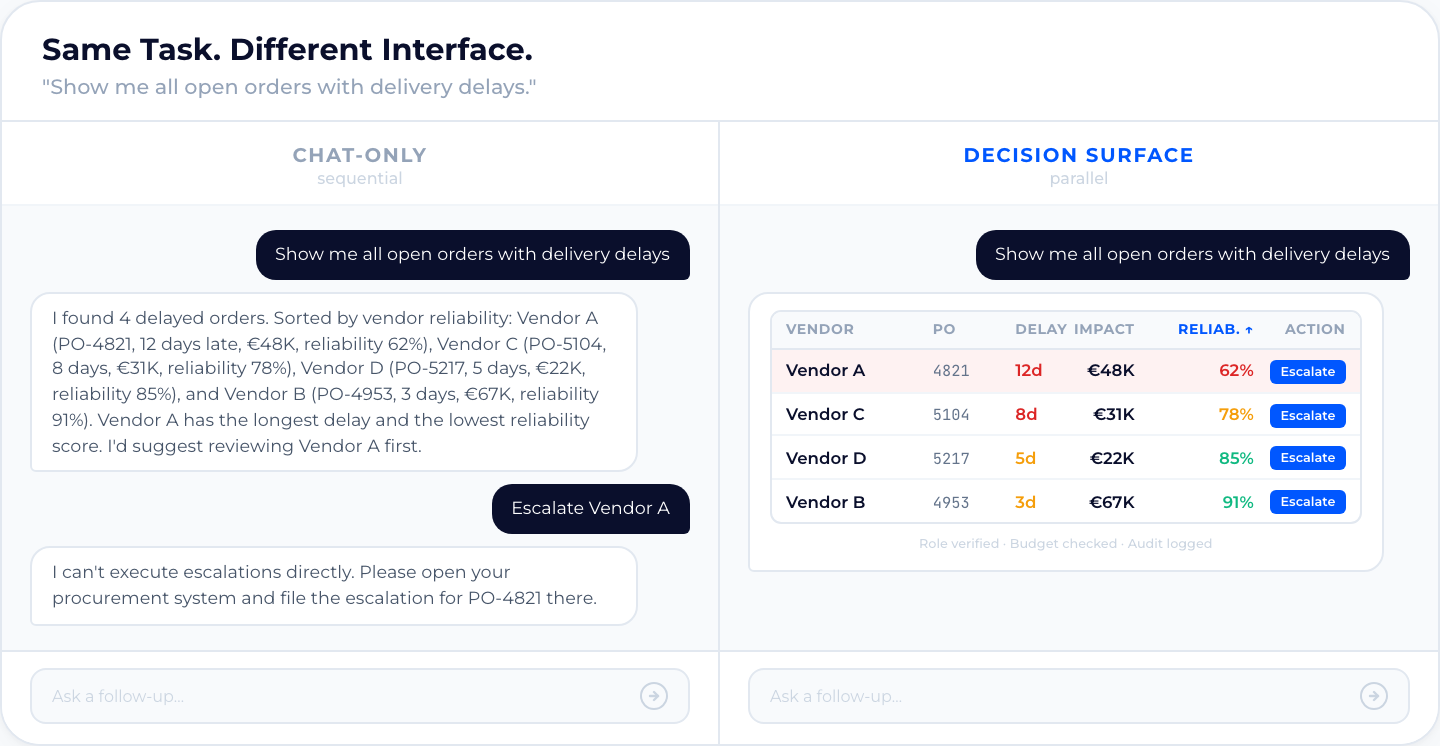

Consider procurement. Today, handling delayed orders through a chat-only AI:

- Ask: “Which orders are delayed?”

- Read a text list of vendor names and dates

- Copy the information into Excel

- Manually compare vendors, filter by impact

- Draft an approval request in a separate system

- Log the decision in another system

Six steps. Three tool switches. Zero audit trail.

The approval request lands in a director’s inbox with a pasted chat excerpt as the only supporting document. The director asks for the vendor comparison. The procurement lead rebuilds it from memory. What doesn’t survive the rebuild: the 3-way match on one vendor failed. Supplier name on the invoice didn’t match the PO, cost center field blank. In SAP, that’s a blocked payment. In a chat transcript, it’s paragraph three.

A decision surface compresses this into one view: a filterable table of delayed orders, sortable by financial impact or supplier reliability. Inline vendor comparison. An approval button that triggers a governed workflow. Role-based access control checks the user’s authority. Validation rules verify budget limits. The system generates an audit record automatically. The user doesn’t read an answer. They act on one.
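What sits behind that approval button might look something like the sketch below: a role check, a limit check, and an audit record written whether the request succeeds or not. The role names, limits, and the `ApprovalService` class are assumptions made for illustration and do not describe any particular product's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role limits: the largest order value each role may approve.
APPROVAL_LIMITS = {"procurement_lead": 50_000, "director": 500_000}


@dataclass
class AuditRecord:
    user: str
    role: str
    order_id: str
    amount: float
    outcome: str
    reason: str
    timestamp: str


@dataclass
class ApprovalService:
    audit_log: list[AuditRecord] = field(default_factory=list)

    def approve(self, user: str, role: str, order_id: str, amount: float) -> bool:
        """Check authority and limits, then record the decision either way."""
        limit = APPROVAL_LIMITS.get(role)
        if limit is None:
            outcome, reason = "rejected", "role has no approval authority"
        elif amount > limit:
            outcome, reason = "rejected", f"amount exceeds the {role} limit of {limit}"
        else:
            outcome, reason = "approved", "within role limit"
        # The audit record is written for approvals and rejections alike.
        self.audit_log.append(
            AuditRecord(user, role, order_id, amount, outcome, reason,
                        datetime.now(timezone.utc).isoformat())
        )
        return outcome == "approved"


service = ApprovalService()
print(service.approve("a.lead", "procurement_lead", "PO-1001", 12_000))  # True
print(service.approve("a.lead", "procurement_lead", "PO-1002", 80_000))  # False
print(len(service.audit_log))  # 2
```

The point of the sketch is that the audit entry is a side effect of the action itself, not a separate step the user has to remember.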

The pattern repeats across domains. A finance controller asks “Which cost centers exceeded budget this quarter?” Chat returns a paragraph with five cost center names, three percentages, and a suggestion to “investigate further.” Nine times out of ten, “investigate further” means a pivot table. A decision surface shows a variance heatmap: red cells for overruns, sorted by magnitude, with drill-down into line items and an inline reforecast trigger. The controller acts in the same view where they spotted the anomaly. No copy-paste. No pivot table detour.
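The ranking behind such a heatmap is simple arithmetic. The sketch below, using made-up cost centers and figures, computes the percentage over budget and sorts overruns by magnitude; the `overruns` function and the data are hypothetical.

```python
# Hypothetical quarterly figures: cost center -> (budget, actual spend).
cost_centers = {
    "CC-100": (200_000, 230_000),
    "CC-200": (150_000, 148_000),
    "CC-300": (80_000, 100_000),
}


def overruns(data: dict[str, tuple[float, float]]) -> list[tuple[str, float]]:
    """Return (cost center, percent over budget) for overruns, largest first."""
    rows = [
        (name, round((actual - budget) / budget * 100, 1))
        for name, (budget, actual) in data.items()
        if actual > budget
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


for name, pct in overruns(cost_centers):
    print(f"{name}: {pct}% over budget")
```

A decision surface would bind these rows to colored cells and a drill-down, so the sort order is visible rather than described.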

That gap — the distance between asking a question and running a business process — is what SaaS closed. Chat-only pried it back open.

Why enterprise can’t wait for the platforms

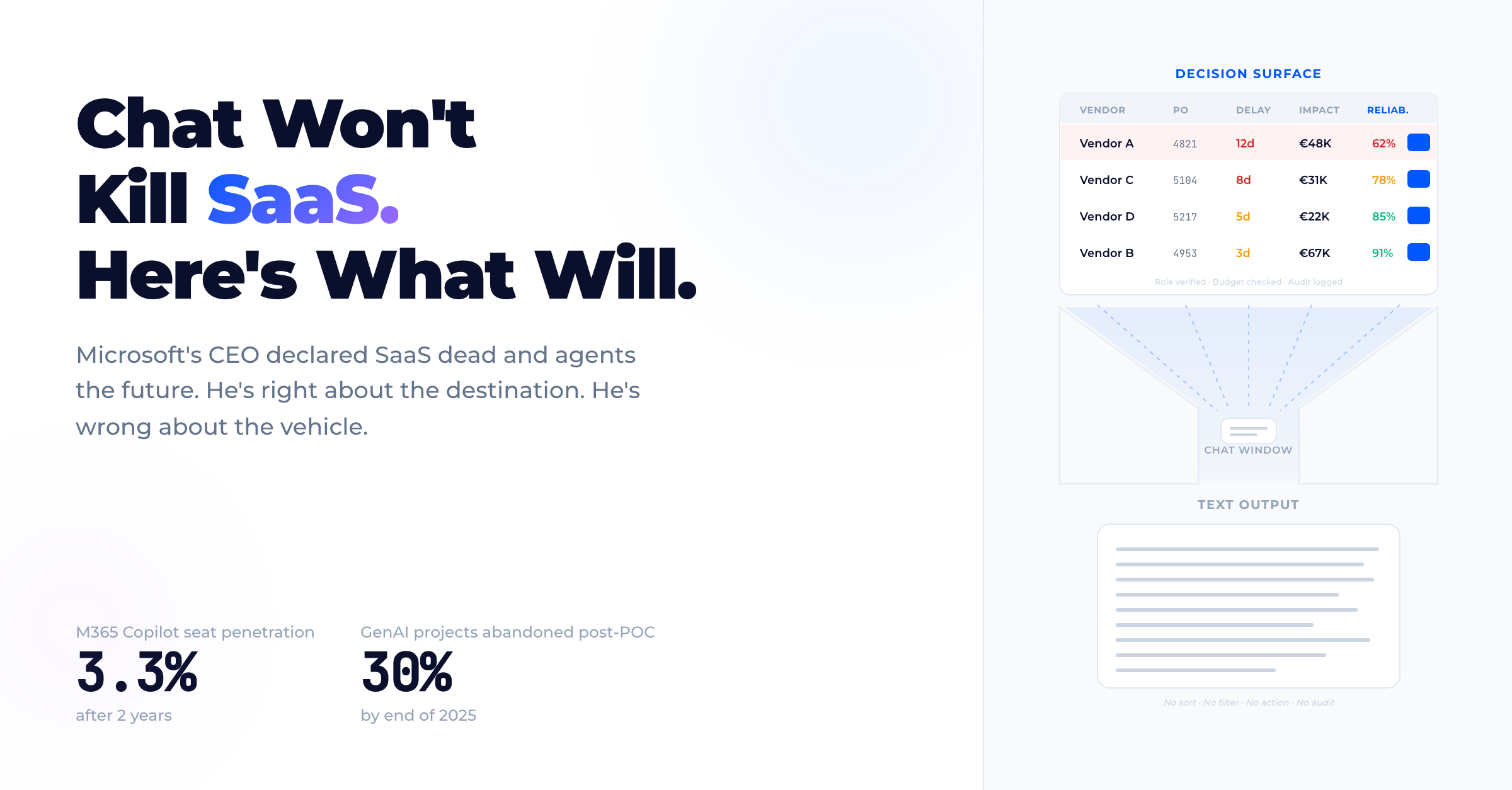

M365 Copilot has ~15 million paid seats against an estimated ~450 million commercial Microsoft 365 seats, roughly 3.3% seat penetration after ~2 years (Microsoft Q2 FY26 earnings, January 28, 2026).[5] Many try it; conversion remains low.

The adoption gap has a structural cause. The average enterprise manages ~900 applications, of which only 29% are integrated (MuleSoft/Salesforce, 2025 Connectivity Benchmark). 95% of IT leaders cite integration as a barrier to AI adoption.[6] Chat-only AI can’t bridge that gap. It answers questions about data it can access, but it can’t render cross-system views, trigger governed workflows, or connect the decision to the execution. A text response to “show me delayed orders” is a step backward from the SAP transaction the user already had.

Nobody loved that SAP transaction. But it worked.

The ROI data confirms this. In July 2024, Gartner projected that 30% of generative AI projects would be abandoned after proof of concept by the end of 2025.[7] RAND puts the broader AI failure-to-production rate above 80%. The exceptions share a pattern: an IDC study of 4,000+ business leaders found an average 3.7x return on AI investment, but organizations with mature data integration reach 10.3x, realized within 13 months.[8] The gap isn’t smarter models. It’s integration depth: isolated AI answers questions in a silo. Integrated AI changes how decisions get made. That requires interfaces that carry structured data, not text. No one ships a governed workflow as a paragraph.

This is a universal problem. Every enterprise in a regulated industry (finance, healthcare, manufacturing, government) already has BI dashboards and reporting tools. What they don’t have: agent-driven workflows where AI proposes actions, the user inspects them visually, and execution runs through access controls, validation rules, and audit trails in a single surface. Chat-only AI skips all of that. In heavily regulated markets such as the EU, the gap is sharper. GDPR and the EU AI Act raise the bar for auditability and control over data. A procurement team running SAP needs an audit trail that satisfies their regulator, not a chat transcript. But the core requirement is the same everywhere: governed decision surfaces between AI and the user. Compliance isn’t a regional add-on. It’s an architectural constraint that shapes the entire stack.

The two-layer test

To replace SaaS, an AI agent must match what SaaS already delivers. Two layers determine whether the interface fits the task. Chat-only typically fails both.

1. Information density. Forty data points rendered simultaneously, not sequentially. Tables, charts, dashboards with visual encodings (color, position, size) that carry meaning without a single word of explanation. Observable requirements: filterable tables, sparklines for trend detection, cohort comparison in a single viewport. Decision surfaces process information in parallel. Text processes it in series.

2. Actionability. Filter, sort, drill down, approve, reject, escalate. Every user action triggers a governed workflow with access control, validation rules, and an audit trail. Observable requirements: one-click approval with policy check, inline form validation against business rules, status transitions with role-based gates. Text describes actions. Interfaces execute them within the rules.

A note on governance. Governance (access control, audit trails, approval workflows) is required regardless of interface. A chat agent can enforce RBAC and log decisions just as well as a dashboard can. Governance is not a reason to choose UI over chat. But governance needs to be visible. When an auditor asks “show me the approval chain for this vendor switch,” a chat transcript is not an inspectable control. Structured UI makes governance state legible: who approved, when, under which policy, with what data.

Varonis analyzed nearly 10 billion files across 1,000 organizations and found that 99% have sensitive data exposed to AI; 90% have sensitive files accessible to every employee through tools like Copilot.[9] Deloitte’s 2026 “State of AI in the Enterprise” survey of 3,235 leaders across 24 countries found that only 21% of organizations have a mature governance model for autonomous AI agents.[10] The governance layer isn’t optional. It’s the prerequisite for enterprise trust. But it’s an architectural requirement for any agent interface, not a differentiator between chat and UI.

Information density and actionability. These are the two layers where the interface makes the difference. Traditional SaaS passes both. Chat-only passes neither. Chat plus structured UI plus workflow execution can pass, but that’s no longer a chatbot. That’s a decision surface. The gap isn’t cosmetic. It’s architectural.

What comes next

Nadella is right: the era of standalone SaaS applications with siloed data and rigid UIs is ending. But SaaS isn’t replaced by chat. It’s replaced by agent-native UI: declarative interface definitions that render into production-grade surfaces with governance built in.

The pieces exist: rendering specs, data access protocols, governance frameworks. They just haven’t been assembled into a single stack. That assembly is inevitable — the same way GUIs replaced command lines not because someone invented windows, but because the pieces (bitmapped displays, mice, event loops) converged until the old paradigm stopped making sense.

Chat is the command line of the AI era. The CLI didn’t disappear — power users still live in it. But it stopped being the mainstream interface the moment GUIs made state visible. The same transition is starting now. Decision surfaces will replace chat-only agents as the primary enterprise interface. The pace depends on one variable: how loudly procurement leads, finance controllers, and compliance officers refuse to work in paragraphs.

Sources

1. Satya Nadella on the BG2 podcast with Bill Gurley and Brad Gerstner, December 13, 2024.

2. Vessey, I. (1991). “Cognitive Fit: A Theory-Based Analysis of the Graphs Versus Tables Literature.” Decision Sciences, 22(2), 219–240.

3. Google Developers Blog, “Introducing A2UI: An open project for agent-driven interfaces,” December 15, 2025.

4. Open-JSON-UI specification, CopilotKit documentation. Open standardization of OpenAI’s declarative Generative UI schema.

5. Microsoft Q2 FY26 Earnings, January 28, 2026. ~15 million paid M365 Copilot seats vs. ~450 million commercial M365 seats = ~3.3% seat penetration.

6. MuleSoft/Salesforce, “2025 Connectivity Benchmark Report,” survey of 1,050+ IT leaders. ~900 applications per organization, 29% integrated, 95% cite integration as a barrier to AI adoption.

7. Gartner, “Gartner Says 30% of Generative AI Projects Will Be Abandoned After Proof of Concept by End of 2025,” press release, July 2024. RAND Corporation, Ryseff et al., “The Root Causes of Failure for Artificial Intelligence Projects and How They Can Succeed,” 2024.

8. IDC, “2024 AI Opportunity Study” (sponsored by Microsoft), survey of 4,000+ business leaders. Average 3.7x return on AI investment; top performers with mature integration reach 10.3x.

9. Varonis, “2025 State of Data Security Report: Quantifying AI’s Impact on Data Risk.” Analysis of nearly 10 billion files across 1,000 organizations.

10. Deloitte, “State of AI in the Enterprise,” 7th edition, January 2026. Survey of 3,235 business and IT leaders across 24 countries: only 21% report a mature governance model for autonomous AI agents.